This article is a bit different from most of my site: My other articles generally discuss specific vendors, their practices, and how they cause harm. This article offers a possible solution — from a company that, let me say at the outset, has invited me to join its advisory board. They didn’t ask me to write this; I’m writing on my own. And they don’t control me or what I write. But for those not interested in a commercial service that may help protect users from spyware, please read no further.

Much of the spyware problem results from users visiting sites that turn out to be untrustworthy or simply malevolent. I’m certainly not inclined to blame the victimized users — it’s hardly their fault that sites run security exploits, offer undisclosed advertising software, or show tricky EULAs that are dozens of pages long. But the resulting software ultimately ends up on users’ computers because users browsed to sites that didn’t pan out.

How to fix this problem? In theory, it seems easy enough. First, someone needs to examine popular web sites, to figure out which are untrustworthy. Then users’ computers need to automatically notify them — warn them! — before users reach untrustworthy sites. These aren’t new ideas. Indeed, half a dozen vendors have tried such strategies in the past. But for various reasons, their efforts never solved the problem. (Details below).

This month, a new company is announcing a system to protect users from untrustworthy web sites: SiteAdvisor. They’ve designed a set of robots — automated web crawlers, virtual machines, and databases — that have browsed hundreds of thousands of web sites. They’ve tracked which sites install spyware — what files installed, what registry changes, what network traffic. And they’ve built a browser plug-in that provides automated notification of worrisome sites — handy red balloons when users stray into risky areas, along with annotations on search result pages at leading search engines.

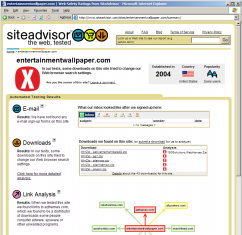

I’ve long known that the best way to assess a web site’s trustworthiness is to examine and test the site. In general that’s remarkably time-consuming — requiring at least a few minutes of time, of a high-skill human researcher. But a tester is inevitably looking for a few basic characteristics. Does the site offer programs for download? If it does, do those programs come with bundled adware or spyware? In principle this is work better suited to a robot — a system that can perform tests around the clock, with full automation, in massive parallel, at far lower cost than a human staff person. SiteAdvisor has built such robots, and they’re running even as I write this. The results are impressive. See an example report.

Of course automated testing of web sites can find more than just spyware. What about spam? Whenever I see a web form that requests my email address, I always worry: Will the web site send me spam? Or sell my name to spammers? As with spyware, it’s a problem of trust. And it’s a problem SiteAdvisor can investigate. Fill out hundreds of thousands of forms, putting a different email address into each. Wait a few months and see which addresses get spam. Case closed.
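The spam test described above can be sketched in a few lines. This is only an illustration of the idea, not SiteAdvisor's actual implementation: the function names `probe_address` and `attribute_spam`, the hash-based address scheme, and the `probe.example` domain are all assumptions made up for this example.

```python
import hashlib


def probe_address(site: str, domain: str = "probe.example") -> str:
    """Derive one unique, repeatable email address per tested site.

    Hypothetical scheme: a short hash of the site name becomes the mailbox.
    """
    tag = hashlib.sha256(site.encode("utf-8")).hexdigest()[:12]
    return f"u{tag}@{domain}"


def attribute_spam(sites, received_recipients, domain="probe.example"):
    """Count received messages per site, keyed by the address each site was given."""
    owner = {probe_address(site, domain): site for site in sites}
    counts = {site: 0 for site in sites}
    for recipient in received_recipients:
        site = owner.get(recipient.lower())
        if site is not None:  # ignore mail sent to addresses we never handed out
            counts[site] += 1
    return counts
```

Because each address is given to exactly one site, any message arriving at that address points back to the site that received it (or to whoever that site passed it on to). Sites whose count stays at zero after the waiting period did not generate mail to their probe address.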

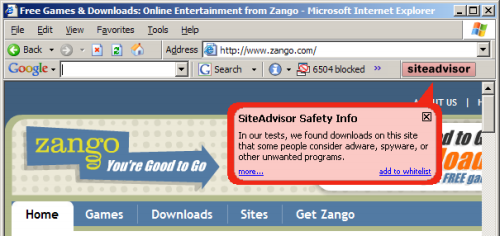

To provide users with timely information about who to trust, SiteAdvisor has to put a plug-in into users’ browsers. In general I’m no fan of browser plug-ins; most plug-ins serve marketing companies’ interests (i.e. by showing ads) rather than actually helping users. But at just 92 pixels in width, SiteAdvisor’s plug-in is remarkably unobtrusive. I run it on my main PC, and it shares space otherwise left vacant by the Google Toolbar (the only other browser plug-in I accept). See first screenshot below, showing SiteAdvisor in action.

Of course there’s more to SiteAdvisor than just these pop-up balloons. If a user clicks “More” in a warning balloon, or otherwise searches the SiteAdvisor site, SiteAdvisor gives detailed information about the risks it found. These detailed “dossiers” report what downloads a site offers (and what software they bundle), as well as links to other sites (potentially hostile or tricky), emails (potential spam), and other areas of possible concern. See right image above, and additional screenshots.

My Role in SiteAdvisor – and How Others Can Help

I’ve been excited about SiteAdvisor — about their product, their technology, and (most importantly) their ability to help users with a serious problem — ever since I learned about the company. I’m so impressed that I agreed to join the company’s advisory board. I’m not involved in day-to-day operations, so specific suggestions are best sent to SiteAdvisor staff, not to me. That said, my relationship with SiteAdvisor is likely to be longer and deeper than my typical consulting gigs, reflecting the seriousness of my commitment to SiteAdvisor.

It’s not easy to design robots that automatically rate the web, and despite SiteAdvisor’s best efforts, their initial ratings aren’t quite perfect. With that in mind, they’re running a preview program. Interested readers can browse SiteAdvisor’s ratings and flag anything that seems wrong or incomplete. SiteAdvisor’s system anticipates its own fallibility — it offers numerous areas for users to contribute comments. There’s even space for reviewed web sites to comment on their ratings — for example, to explain why they think they’ve been unfairly criticized.

Why get involved? If you think, as I do, that SiteAdvisor will attract a large group of passionate users, then it’s sensible to help improve the reviews these users receive. Also, SiteAdvisor has produced an incredible dataset, which they’ll be sharing under a Creative Commons license. In the coming months, I’ll be using this data for research; I’m anticipating some exciting articles analyzing how and where users get infected with spyware. Meanwhile, preview participants get access to SiteAdvisor’s fascinating dossiers (example) — a great way to track which programs install which spyware.

As I mentioned above, SiteAdvisor isn’t the first group seeking to improve the web by rating web sites. But SiteAdvisor makes major advances over previous efforts.

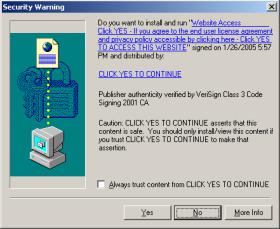

Consider, for example, the code-signing system associated with ActiveX controls. (See example at right.) Anticipating security problems with ActiveX, Microsoft designed IE so that it only shows an ActiveX installation prompt if the ActiveX package is properly signed by an accredited code-signer like (in this example) VeriSign. VeriSign in turn sets criteria on who can receive these certificates. But despite these checks, the system turns out to be woefully insecure. For one, VeriSign wasn’t always tough in limiting who can get its certs. (The cert at right was issued to a company calling itself “click yes to continue,” a highly misleading company name. Additional examples.) In addition, VeriSign’s main requirement is that a company provide a verifiable name. A company’s software may be highly objectionable (pop-up ads, privacy violations, spam zombies, you name it), but if the company gives its true name and pays VeriSign $200 to $600, then it is likely to receive a certificate. After I criticized VeriSign’s cert-issuing practices this spring, VeriSign tightened its processes somewhat, but its Thawte subsidiary continues to issue certificates to companies that users rightly dislike. And other cert-issuers are even worse.

The ActiveX debacle shows at least three problems that can plague a certification system.

1) Certifying the wrong thing. ActiveX code-signing certifies characteristics of lesser concern to typical users. In particular, code-signing certifies that a vendor is who it says it is, and that the specified vendor really did develop the program being offered. That’s a nice start, but it’s not what most users are most worried about. Instead, users reasonably want to know: Is this program safe? Will it hurt my computer? As it turns out, a code-signing certificate says nothing about the trustworthiness of the underlying code. But seeing the “verified” statement and VeriSign’s well-respected name, users mistakenly think code-signing means a program is sure to be safe.

2) Dependent on payment. I worry about certification businesses that receive payment from the companies being certified. If VeriSign issues a code-signing certificate, it gets paid $200 to $600. If it denies a cert, it gets $0. So it’s no surprise that lots of certificates get issued. I credit VeriSign’s good intentions, on the whole. But VeriSign staff face some odd and troubling incentives as they try to meet their code-signing financial objectives.

3) Complaints. There’s often no clear procedure for users to complain of improperly-issued certificates. I previously noted that VeriSign lacked a formal complaint and investigation process. After my article, VeriSign established a complaint form. But there are no public records of complaints received, of pending complaints, or of complaint dispositions. VeriSign may be doing a great job of handling complaints and of correcting any errors, but the public has no way to know.

Remarkably, these same problems plague other self-styled trust authorities. TRUSTe’s main seal, its Web Privacy Seal, largely certifies that a web site has a privacy policy and that the site has agreed to resolve disputes in the way that TRUSTe requires. The policy might be highly objectionable and one-sided, but TRUSTe will still issue its seal. From the perspective of typical users, this is a “certifying the wrong thing” problem: Users expect TRUSTe to tell them that a site’s privacy policy is fair and that users can confidently provide personal information to the site, but in fact the certificate implies no such thing. (Indeed, six months after I revealed Direct Revenue, eZula, Hotbar, and Webhancer as TRUSTe certificate-holders, TRUSTe’s member list says all but eZula are still members in good standing. In addition, these companies are known not for their web sites but for their advertising software, which TRUSTe’s certificate doesn’t cover at all. So TRUSTe’s certification is especially likely to mislead users seeking to evaluate these vendors.) Furthermore, TRUSTe receives much of its funding from the vendors it certifies, raising the worry of financial incentives to issue undeserved certificates. Finally, when I’ve sent complaints to TRUSTe, I haven’t always felt I received a prompt or appropriate response. So in my view TRUSTe suffers the same three problems I flag for the VeriSign/code-signing system.

TrustWatch’s search engine and toolbar are superficially similar to SiteAdvisor: Both companies offer toolbars that claim to help users stay safe online. But TrustWatch suffers from the same kinds of mistakes described above. TrustWatch generally endorses a site if it has a certificate from GeoTrust, Entrust, TRUSTe, or HackerSafe. These groups vary in their respective policies, but none of them affirmatively checks for the privacy violations, spyware, spam, or other ill effects that users reasonably worry about. Instead, their focus is on SSL certificates, which are important for some purposes but peripheral to today’s biggest security problems. Meanwhile, the TrustWatch endorsers charge for their certs, raising the payment problems flagged above. Predictably, TrustWatch’s system yields poor results. For example, TrustWatch certifies 180solutions and Direct Revenue with its highest “verified secure” rating. That’s an endorsement few security experts would share.

At least one certification system (besides SiteAdvisor) seems immune from the problems described above: Stan James’ Outfoxed provides a non-profit self-organizing assessment of web site trustworthiness, based on recommendations from a web of trusted experts. Because individual users can decide which recommenders to trust, Outfoxed offers the prospect of ratings based on characteristics users actually care about, solving the “wrong thing” problem. Outfoxed doesn’t charge web sites for ratings, and Outfoxed’s relationship-based trust assessments can distribute meaningful feedback to assure rating accuracy. So Outfoxed addresses the problems described above, and I think it reflects a major step forward. That said, as a self-organizing system, Outfoxed needs a critical mass of experts in order to take off. I worry that it might not get there.

Separately, a few security firms have designed automated systems to seek out spyware. See Microsoft’s HoneyMonkeys and Webroot’s Phileas. But these projects only detect exploits. In particular, they don’t identify the social engineering and misleading installations that web users face with increasing regularity.

SiteAdvisor won’t suffer from the three major problems described above. SiteAdvisor tests the specific behaviors most objectionable to typical users — extra pop-up ads, privacy violations, gummed up PCs, and of course spam — and SiteAdvisor doesn’t give a site a green light just because it has an SSL cert or a posted privacy policy. SiteAdvisor won’t issue certifications upon payment of a fee. And in addition to soliciting an abundance of comments, SiteAdvisor promptly and automatically publishes comments for public review. So, though I’ve been critical of other certification systems, I’m truly excited about SiteAdvisor.

TrustWatch certifies 180solutions as a “verified secure” site
