In HBS Working Knowledge, I write “Op-Ed: It’s a Bad Idea to Ban Customers from Recording Videos.”

Passenger Right to Record at Airports and on Airplanes? with Mike Borsetti

Passengers have every reason to record airline staff and onboard events–documenting onboard disputes (such as whether a passenger is in fact disruptive or a service animal disobedient), service deficiencies (perhaps a broken seat or inoperative screen), and controversial remarks from airline personnel (like statements of supposed rules, which may not match actual contract provisions). For the largest five US airlines, no contract provision–general tariff, conditions of carriage, or fare rules–prohibits such recordings. Yet airline staff widely tell passengers that they may not record–citing “policies” passengers couldn’t reasonably know and certainly didn’t agree to in the usual contract sense. (For example, United’s policy is a web page not mentioned in the online purchase process. American puts its anti-recording policy in its inflight magazine, where passengers only learn it once onboard.) If passengers refuse to comply, airline staff have threatened all manner of sanctions including denial of transport and arrest. In one incident in July 2016, a Delta gate agent even assaulted a 12-year-old passenger who was recording her remarks.

In a Petition for Rulemaking filed this week with the US Department of Transportation, Mike Borsetti and I ask DOT to affirm that passengers have the right to record what they lawfully see and hear on and around aircraft. We explain why such recordings are in the public interest, and we present the troubling experiences of passengers who have tried to record but have been punished for doing so. We conclude with specific proposed provisions to protect passenger rights.

One need not look far to see the impact of passenger recordings. United recently summoned security officers who assaulted passenger David Dao, who had done nothing worse than peacefully remain in the seat he had paid for. The officers falsely claimed that Dao was “swinging his arms up and down with a closed fist,” then “started flailing and fighting” as he was removed. United CEO Oscar Munoz falsely claimed that Dao was “disruptive and belligerent”. Fortunately for Dao and the interested public, five passenger recordings provided the crucial proof to rebut those claims. But imagine if United had demanded that other passengers onboard turn off their cameras before security officers boarded, or delete their recordings afterward and prove that they had done so, consistent with passenger experiences we report in our Petition for Rulemaking. Had United made such demands, the officers’ false allegations would have gone unchallenged and justice would not have been done. Hence our insistence that recordings are proper even–indeed, especially–without the permission of the airline staff, security officers, and others who are recorded.

Our filing:

Petition for Rulemaking: Passenger Right to Record

DOT docket with public comment submission form

Privacy Puzzles at Google Play

Last week app developer Dan Nolan noticed that Google transaction records were giving him the name, geographic region, and email address of every user who bought an Android app he sold via Google Play. Dan’s bottom line was simple: “Under no circumstances should [a developer] be able to get the information of the people who are buying [his] apps unless [the customers] opt into it and it’s made crystal clear to them that [app developers are] getting this information.” Dan called on Google to cease these data leaks immediately, but Google instead tried to downplay the problem.

In this post, I examine Google’s relevant privacy commitments and argue that Google has promised not to reveal users’ data to developers. I then critique Google’s response and suggest appropriate next steps.

Google’s Android Privacy Promise

In its overarching Google Privacy Policy, Google promises to keep users’ data confidential. Specifically, at the heading “Information we share”, Google promises that “We do not share personal information with companies, organizations and individuals outside of Google unless one of the following circumstances apply”. Google then lists four specific exceptions: “With your consent”, “With domain administrators”, “For external processing”, and “For legal reasons.” None of these exceptions applies: users were never told that their information would be shared (not to mention “consent[ing]” to such sharing); Google shared data with app developers, not domain administrators; app developers do not process data for Google, and the data was never processed nor provided for processing; and no legal reason required the sharing of this information. Under Google’s standard privacy policy, users’ data simply should not have been shared.

Users purchase Android apps via the Google Play service, so Google Play policies also limit how Google may share users’ information. But the Google Play Terms of Service say nothing about Google sharing users’ details with app developers. Quite the contrary, the Play TOS indicate that Google will not share users’ information with app developers. At heading 10, Magazines on Google Play, Google specifically notes the additional “Information Google Shares with Magazine Publishers”: customer “name, email address, [and] mailing address.” But Google makes no special mention of information shared with any other type of Google Play content provider. Notice: Google notes that it shares certain extra information with magazine publishers, but it makes no corresponding mention of sharing with other content providers. The only plausible interpretation is that Google does not share the listed information with any other kind of content provider.

Users’ Google Play purchases are processed via Google Wallet, so Google Wallet policies also limit how Google may share users’ information. But the Google Wallet privacy policy says nothing about Google sharing users’ details with app developers. Indeed, the Google Wallet privacy policy is particularly narrow in its statement of when Google may share users’ personal information:

Information we share

We will only share your personal information with other companies or individuals outside of Google in the following circumstances:

* As permitted under the Google Privacy Policy.

* As necessary to process your transaction and maintain your account.

* To complete your registration for a service provided by a third party.

None of these exceptions applies to Google sharing users’ details with app developers. The preceding analysis confirms that the Google Privacy Policy says nothing of sharing users’ information with app developers, so the first exception is inapplicable. Nolan’s post confirms that app developers do not need users’ details in order to provide the apps users request; Google’s app delivery system already provides apps to authorized users. And app developers need not communicate with users by email, making it unnecessary for Google to provide an app developer with a user’s email address. Google might argue that it could be useful, in certain circumstances, for app developers to be able to email the users who had bought their apps. But this certainly is not “necessary.” Indeed, Nolan had successfully sold apps for months without doing so. The third exception does not apply to any app that does not require “registration” (most do not). Even if we interpret “registration” as installation and perhaps ongoing use, Nolan’s experience confirms why the third exception is also inapplicable: Nolan was easily able to do everything needed to sell and service the apps he sold without ever using users’ email addresses.

Even if it were necessary for Google to let developers contact the users who bought their apps, such communications do not require that Google provide developers with users’ email addresses. Quite the contrary, Google could provide remailer email addresses: a developer would write to an email address that Google specifies, and Google would forward the message as needed. Alternatively, Google could develop an API to let developers contact users. These suggestions are more than speculative: Google Checkout uses exactly the former technique to shield users’ email addresses from sellers. (From Google’s 2006 announcement: “To provide more control over email spam, Google Checkout lets shoppers choose whether or not to keep email addresses confidential or turn off unwanted email from the stores where they shop”) Similarly, Google Wallet creates single-use credit card numbers (“Virtual OneTime Card”) to let users make purchases without revealing their payment details to developers. Having developed such sophisticated methods to protect user privacy in other contexts, including more complicated contexts, Google cannot credibly argue that it was “necessary” to reveal users’ email addresses to app developers.
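
To make the remailer idea concrete, here is a minimal sketch of how a marketplace might hand each developer an opaque forwarding alias instead of a buyer's real address. Everything in it is hypothetical: the `AliasRelay` class, the `relay.example.com` domain, and the developer identifiers are illustrative assumptions, not a description of how Google Checkout or any Google system works.

```python
import secrets


class AliasRelay:
    """Hypothetical remailer: maps opaque aliases to real buyer addresses."""

    def __init__(self, domain="relay.example.com"):  # placeholder domain
        self.domain = domain
        self._alias_to_real = {}   # alias -> real address (kept by the marketplace)
        self._pair_to_alias = {}   # (buyer, developer) -> alias

    def alias_for(self, user_email, developer_id):
        """Return a stable alias for this buyer/developer pair, creating it if needed."""
        key = (user_email, developer_id)
        if key not in self._pair_to_alias:
            # Random token, so the alias reveals nothing about the buyer.
            alias = f"{secrets.token_hex(8)}@{self.domain}"
            self._pair_to_alias[key] = alias
            self._alias_to_real[alias] = user_email
        return self._pair_to_alias[key]

    def resolve(self, alias):
        """Marketplace-side lookup used when forwarding a developer's message."""
        return self._alias_to_real.get(alias)


relay = AliasRelay()
alias = relay.alias_for("buyer@example.org", "developer-1")
print(alias)                 # random alias at relay.example.com
print(relay.resolve(alias))  # only the marketplace can map it back
```

Issuing a separate alias per developer also means one developer's alias can be revoked, or mail to it throttled, without affecting the buyer's dealings with anyone else.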

Siliconvalley.com quotes an unnamed Google representative defending Google’s approach:

“Google Wallet shares the information necessary to process a transaction, which is clearly spelled out in the Google Wallet Privacy Notice.”

I emphatically disagree. First, it simply is not “necessary” to provide developers with access to customer names or email addresses in order to process customer transactions. Apple has long run a similar but larger app marketplace without doing so. Scores of developers, Nolan among many others, successfully provide apps without using (and often without even knowing they are receiving) customer names and email addresses. To claim that it is “necessary” to provide this information to developers, Google would need to establish that there is truly no alternative — a high bar, which Google has not even attempted to reach.

Second, this data sharing is not “spelled out in the Google Wallet Privacy Notice” and certainly is not “clear[]” there. The preceding section analyzes the relevant section of the Google Wallet privacy policy. Nothing in that document “clearly” states that Google shares users’ email addresses with third-party developers. The only conceivable argument for such sharing is that such sharing is “necessary” — but that immediately returns us to the arguments discussed above.

Google widely promises to protect users’ private information. Google makes these promises equally in mobile. Having promised protection and delivered the opposite, Google should not be surprised to find users angry.

Some app developers defend Google’s decision to provide developers with customer details. For example, the Application Developers Alliance commented that “in the Google Play environment the app purchaser customer data (e.g., name and email address) belongs to the developer who is the seller” — arguing that it is therefore appropriate that Google provide this information to app developers. Barry Schwartz of Marketing Land added “I want to be able to service my customers” and indicated that sharing customer names and emails helps with support and refunds. Perhaps there are good reasons to provide customer data to developers. But these arguments offer no reason why Google did not tell users that their details would be sent to app developers. The Application Developers Alliance suggests that anyone uncertain about data sharing should “carefully review the Google Play and Google Wallet terms of service.” But these documents nowhere state that users’ information is provided to app developers (recall the analysis above), and in fact the documents indicate exactly the opposite.

Meanwhile, sharing users’ details with app developers risks significant harms. Nolan noted two clear problems: First, a developer could use customer contact details to track and harass users who left negative reviews or sought refunds. Second, an attacker could write malware that, running on app developers’ computers, logs into Google’s systems to collect user data. While one might hope app developers would keep their computers secure from such malware, there are tens of thousands of Android developers. Some are bound to have poor security on some local machines. Users’ data shouldn’t be vulnerable to the weakest link among these thousands of developers. An attacker could devise a highly deceptive attack with information about which users bought which apps when — yielding customized, accurate emails inviting users to provide passwords (actually phishing) or install updates (actually malware).

Moreover, Google is under a heightened obligation to abide by its privacy commitments. For one, privacy policies have the force of contract, so any company breaching its privacy policy risks litigation by harmed consumers. Meanwhile, Google’s prior privacy blunders have put Google under higher scrutiny: Google’s Buzz consent order includes twenty years of FTC oversight of Google’s privacy practices. After Google violated the FTC consent order by installing special code to monitor Apple Safari users whose browser settings specifically indicated they did not want to be tracked, the FTC imposed a $22.5 million fine, its largest ever. Now Google has again violated its privacy policies. A further investigation is surely in order.

A full investigation of Google’s activities in this area will confirm who knew what when. Though Nolan’s complaint was the first to gain widespread notice, at least three other developers had previously raised the same concern (1, 2, 3), dating back to June 2012 or earlier. An FTC investigation will confirm whether Google staff noted these concerns and, if they noticed, why they failed to take action. Perhaps some Google staff proposed updating or clarifying applicable privacy policies; an investigation would determine why such improvements were not made. An investigation would also confirm the allegation (presented in an update to News.com.au reporting on this subject) that prior to October 2012, Google intentionally protected users’ email addresses by providing aliases — letting app developers contact users without receiving users’ email addresses. Given the clear reduction in privacy resulting from eliminating aliases, an investigation would check whether and why Google made this change.

Even before that investigation, Google can take steps to begin to make this right. First, Google should immediately remove users’ details from developers’ screens. Google should simultaneously contact developers who had impermissible access and ask them to destroy any copies that they made. If Google has a contractual basis to require developers to destroy these copies, it should invoke those rights. In parallel, Google should contact victim users to let them know that Google shared their information in a way that was not permitted under Google’s own policies. Google should offer affected users Google’s unqualified apologies as well as appropriate monetary compensation. Since Google revealed users’ information only after users purchased paid apps, users’ payments offer a natural remedy: Google should provide affected users with a full refund for any apps where Google’s privacy practices did not live up to Google’s commitments.

On Facebook and Privacy

I opined on Facebook’s shifting privacy promises and string of privacy breaches.

Facebook Leaks Usernames, User IDs, and Personal Details to Advertisers (updated May 26, 2010)

Browse Facebook, and you wouldn’t expect Facebook’s advertisers to learn who you are. After all, Facebook’s privacy policy and blog posts promise not to share user data with advertisers except when users grant specific permission. For example, on April 6, 2010 Facebook’s Barry Schnitt promised: “We don’t share your information with advertisers unless you tell us to (e.g. to get a sample, hear more, or enter a contest). Any assertion to the contrary is false. Period.”

My findings are exactly the contrary: Merely clicking an advertiser’s ad reveals to the advertiser the user’s Facebook username or user ID. With default privacy settings, the advertiser can then see almost all of a user’s activity on Facebook, including name, photos, friends, and more.

In this article, I show examples of Facebook’s data leaks. I compare these leaks to Facebook’s privacy promises, and I point out that Facebook has been on notice of this problem for at least eight months. I conclude with specific suggestions for Facebook to fix this problem and prevent its recurrence.

Facebook’s data leak is straightforward: Consider a user who clicks a Facebook advertisement while viewing her own Facebook profile, or while viewing a page linked from her profile (e.g. a friend’s profile or a photo). Upon such a click, Facebook provides the advertiser with the user’s Facebook username or user ID.

Facebook leaks usernames and user IDs to advertisers because Facebook embeds usernames and user IDs in URLs which are passed to advertisers through the HTTP Referer header. For example, my Facebook profile URL is http://www.facebook.com/bedelman. Notice my username (yellow).

Of course, it would be incorrect to assume that a person looking at a given profile is in fact the owner of that profile. A request for a given profile might reflect that user looking at her own profile, but it might instead be some other user looking at the user’s profile. However, when a user views her own profile page, Facebook automatically embeds a “profile” tag (green) in the URL:

http://www.facebook.com/bedelman?ref=profile

Furthermore, when a user clicks from her profile page to another page, the resulting URL still bears the user’s own user ID or username, along with the details of the later-requested page. For example, when I view a friend’s profile, the resulting URL is as shown below. Notice the continued reference to my username (yellow) and the fact that this is indeed my profile (green), along with an appendage naming the user whose page I am now viewing (blue).

http://www.facebook.com/bedelman?ref=profile#!/pacoles

Each of these URLs is passed to advertisers whenever a user clicks an ad on Facebook. For example, when I clicked a Livingsocial ad on my own profile page, Facebook redirected me to the advertiser, yielding the following traffic to the advertiser’s server. Notice the transmission in the Referer header (red) of my username (yellow) and the fact that I was viewing my own profile page (green).

GET /deals/socialads_reflector?do_not_redirect=1&preferred_city=152&ref=AUTO_LOWE_Deals_ 1273608790_uniq_bt1_b100_oci123_gM_a21-99 HTTP/1.1

Accept: */*

Referer: http://www.facebook.com/bedelman?ref=profile

…

Host: livingsocial.com

…

The same transmission occurs when a user clicks from her profile page to a friend’s page. For example, I clicked through to a friend’s profile, http://www.facebook.com/bedelman?ref=profile#!/pacoles, where I clicked another Livingsocial ad. Again, Facebook’s redirect caused my browser to transmit in its Referer header (red) my username (yellow) and the fact that that username reflects my personal profile (green). Interestingly, my friend’s username was omitted from the transmission because it occurred after a pound sign, causing it to be automatically removed from Referer transmission.

GET /deals/socialads_reflector?do_not_redirect=1&preferred_city=152&ref=AUTO_LOWE_Deals_ 1273608790_uniq_bt1_b100_oci123_gM_a21-99 HTTP/1.1

Accept: */*

Referer: http://www.facebook.com/bedelman?ref=profile

…

Host: livingsocial.com

…
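
The pound-sign behavior can be reproduced in a few lines. The sketch below uses Python's standard `urllib.parse.urldefrag` to mimic what a browser does when it builds a Referer header from the page URL: it drops everything from the `#` onward. The helper name `referer_for` is mine, and the sketch ignores any referrer policy a site or browser might additionally apply.

```python
from urllib.parse import urldefrag


def referer_for(page_url: str) -> str:
    """Approximate the Referer a browser sends: the page URL minus its fragment."""
    url_without_fragment, _fragment = urldefrag(page_url)
    return url_without_fragment


# URL shown in the address bar while viewing a friend's profile.
page = "http://www.facebook.com/bedelman?ref=profile#!/pacoles"
print(referer_for(page))
# → http://www.facebook.com/bedelman?ref=profile
```

The friend's username disappears, but the part before the pound sign, which names the person who is actually browsing, is sent intact.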

In further testing, I confirmed that the same transmission occurs when a user clicks from her profile page to a photo page, or to any of various other pages linked from a user’s profile.

With a Facebook member’s username or user ID, current Facebook defaults allow an advertiser (and anyone else) to obtain a user’s name, gender, other profile data, picture, friends, networks, wall posts, photos, and likes. Furthermore, the advertiser already knows the user’s basic demographics, since the advertiser knows the user fits the profile the advertiser had requested from Facebook. For example, in grey highlighting above, the advertiser learned from Facebook my age, gender, and geographic location.

Facebook’s Contrary Statements about User Privacy vis-a-vis Advertisers

Facebook has made specific promises as to what information it will share with advertisers. For one, Facebook’s privacy policy promises “we do not share your information with advertisers without your consent” (section 5). Then, in section 7, Facebook lists eleven specific circumstances in which it may share information with others — but none of these circumstances applies to the transmission detailed above.

Facebook’s recent blog postings also deny that Facebook shares users’ identities with advertisers. In an April 6, 2010 post, Facebook promised: “We don’t share your information with advertisers unless you tell us to (e.g. to get a sample, hear more, or enter a contest). Any assertion to the contrary is false. Period.” Facebook’s prior postings were similar. July 1, 2009: “Facebook does not share personal information with advertisers except under the direction and control of a user. … You can feel confident that Facebook will not share your personal information with advertisers unless and until you want to share that information.” December 9, 2009: “Facebook never shares personal information with advertisers except under your direction and control.” As to all these claims, I disagree. Sharing a username or user ID upon a single click, without any disclosure or indication that such information will be shared, is not at a user’s direction and control.

Facebook Has Been on Notice of This Problem for Eight Months

AT&T Labs researcher Balachander Krishnamurthy and Worcester Polytechnic Institute professor Craig Wills previously identified the general problem of social networks leaking user information to advertisers, including leakage through the Referer headers detailed above. In August 2009, their On the Leakage of Personally Identifiable Information Via Online Social Networks was posted to the web and presented at the Workshop on Online Social Networks (WOSN).

Through Krishnamurthy and Wills’ research, Facebook eight months ago received actual notice of the data leakage at issue. A September 2009 MediaPost article confirms Facebook’s knowledge through its spokesperson’s response. However, Facebook spokesperson Simon Axten substantially understated the severity of the data leak: Axten commented “The average Facebook user views a number of different profile pages over the course of a session …. It’s thus difficult for a tracking website to know whether the identifier belongs to the person being tracked, or whether it instead belongs to a friend or someone else whose profile that person is viewing.” I emphatically disagree. As shown above, when a user views her own profile, or a page linked from her own profile, the “?ref=profile” tag is added to the URL, exactly confirming the identity of the profile owner.
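
To show how little analysis the “?ref=profile” tag demands, here is a short sketch of the check a receiving server could run on each incoming Referer. The function name `profile_owner_from_referer` is hypothetical, and the sketch handles only username-style profile URLs of the form shown above; it is an illustration of the reasoning, not a reconstruction of any advertiser's actual code.

```python
from urllib.parse import urlparse, parse_qs


def profile_owner_from_referer(referer: str):
    """Return the username if the Referer marks the clicker as the profile owner."""
    parts = urlparse(referer)
    if parts.hostname != "www.facebook.com":
        return None
    query = parse_qs(parts.query)
    # The ref=profile tag is what distinguishes "my own profile" from
    # "someone else's profile I happen to be viewing".
    if query.get("ref") != ["profile"]:
        return None
    return parts.path.strip("/") or None


print(profile_owner_from_referer("http://www.facebook.com/bedelman?ref=profile"))
# → bedelman
print(profile_owner_from_referer("http://www.facebook.com/bedelman"))
# → None
```

Without the tag, the ambiguity Axten describes is real and the function returns nothing. With the tag, a single string comparison resolves it.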

Since receiving actual notice of these data leaks, Facebook has implemented scores of new features for advertising, monetization, information-sharing, and reorganization. Inexplicably, Facebook has failed to address leakage of user information to advertisers. That’s ill-advised and short-sighted: Users don’t expect ad clicks to reveal their names and details, and Facebook’s privacy policy and blog posts promise to honor that expectation. So Facebook needs to adjust its actual practices to meet its promises.

Preventing advertisers from receiving usernames and user IDs is strikingly straightforward: A modified redirect can mask referring URLs. Currently, Facebook uses a simple HTTP 301 redirect, which preserves referring URLs — exactly creating the problem detailed above. But a FORM POST redirect, META REFRESH redirect, or JavaScript redirect could conceal referring URLs — preventing advertisers from receiving username or user ID information.

Instead, Facebook has partially implemented the pound sign method described above — putting some, but not all, sensitive information after a pound sign, with the result that sometimes this information is not transmitted as a Referer. If fully implemented across the Facebook site, this approach might prevent the data leakage I uncovered. However, in my testing, numerous within-Facebook links bypass the pound sign masking. In any event, an improved redirect would be much simpler to implement — requiring only a single adjustment to the ad click-redirect script, rather than requiring changes to URL formats across the Facebook site.
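
As a rough illustration of the META REFRESH option, the sketch below generates the small intermediate page that a click-redirect script could return with an ordinary 200 response instead of an HTTP redirect. Because the browser then navigates from the intermediate page rather than from the profile page, the advertiser should see at most the intermediate page's URL. The function name and the example advertiser URL are assumptions for illustration, exact Referer handling on a meta refresh has varied across browsers, and the intermediate page's own URL must of course carry no user identifier.

```python
from html import escape


def interstitial_page(advertiser_url: str) -> str:
    """Build a minimal HTML page that immediately forwards to the advertiser."""
    # Escape the URL so characters such as & and " cannot break the attribute.
    safe_url = escape(advertiser_url, quote=True)
    return (
        "<!DOCTYPE html>\n"
        "<html><head>\n"
        f'<meta http-equiv="refresh" content="0; url={safe_url}">\n'
        "</head><body></body></html>\n"
    )


# Hypothetical advertiser landing page.
print(interstitial_page("http://advertiser.example/landing?campaign=1&city=152"))
```

The change is confined to the one script that handles ad clicks, which is why this approach is simpler than rewriting URL formats across the whole site.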

Finally, Facebook should inform users of what has occurred. Facebook should apologize to users, explain why it didn’t live up to its explicit privacy commitments, and establish procedures — at least robust testing, if not full external review — to assure that users’ privacy is correctly protected in the future.

On May 20, 2010, the Wall Street Journal reported the problem detailed above. On or about that same day, Facebook removed the ref=profile tags that were the crux of the data leak.

I yesterday spoke with Arturo Bejar, a Facebook engineer who investigated this problem. Arturo told me that after Krishnamurthy and Wills’ article, he reviewed relevant Facebook systems in search of leakage of user information. At that time, he found none, in that Facebook revealed the URLs users were browsing when they clicked ads, but did not indicate whether the user clicking a given ad was in fact the owner of the profile showing that ad. However, in a subsequent Facebook redesign, beginning in February 2010, Facebook user home pages received a new “profile” button which carried the ref=profile URL tags I analyze above. Because this tag was added without a further privacy review, Arturo tells me that he and others at Facebook did not consider the interaction between this tag and the problem I describe above. Arturo says that’s why this problem occurred despite the prior Krishnamurthy and Wills article.

Arturo also pointed out that the problem I describe did not affect advertisers whose landing pages were pages on Facebook (rather than advertisers’ own external sites).

Meanwhile, Facebook’s May 24 “Protecting Privacy with Referrers” presents Facebook’s view of the problem in greater detail. Facebook’s posting offers a fine analysis of the various methods of redirects and Facebook’s choice among them. It’s worth a read.

After discussing the problem with Arturo and reading Facebook’s new post, I reached a more favorable impression of Facebook’s response. But my view is tempered by Facebook’s ill-advised attempts to downplay the breach.

- Rather than affirmatively describing the specific design flaw, Facebook’s post describes what “could” “potentially” occur. Facebook’s post never gives a clear affirmative statement of the problem.

- Facebook says advertisers would need to “infer” a user’s username/ID. But usernames and IDs are sent directly, in clear and unambiguous URLs, hardly requiring complex analysis.

- Facebook claims that the breach affected only “one case … if a user takes a specific route on the site” (WSJ quote). Facebook also calls the problem “a rarely occurring case” (posting). I dispute these characterizations. It is hardly “rare” for a user to view her own profile. To view her own profile and click an ad? There’s no reason to think that’s any less frequent than clicking an ad elsewhere. To view her own profile, click through to another page, and then click an ad? That’s perfectly standard. Furthermore, although Facebook told the Journal there is “one case” in which data is leaked improperly, in fact I’ve found many such cases including clicking from profile to ad, from profile to friend’s page to ad, and from profile to photo page to ad, to name three.

- Through transmission in HTTP Referer headers, usernames and IDs reach advertisers’ web servers in a manner such that default server log files would store this data indefinitely, and default analytics would tabulate it accordingly. Facebook says it has “no reason to believe that any advertisers were exploiting” the data breach I reported, but the fact is, this data ends up in a place where advertisers could (and, as to historic data, still can) access it easily, using standard tools, and at their convenience.

- Although Facebook’s post says the problem is “potential,” I found that a user’s username/ID is sent with each and every click in the affected circumstances.

So the problem was substantial, real, and immediate. Facebook errs in suggesting the contrary.

Google Toolbar Tracks Browsing Even After Users Choose "Disable"

Disclosure: I serve as co-counsel in unrelated litigation against Google, Vulcan Golf et al. v. Google et al. I also serve as a consultant to various companies that compete with Google. But I write on my own — not at the suggestion or request of any client, without approval or payment from any client.

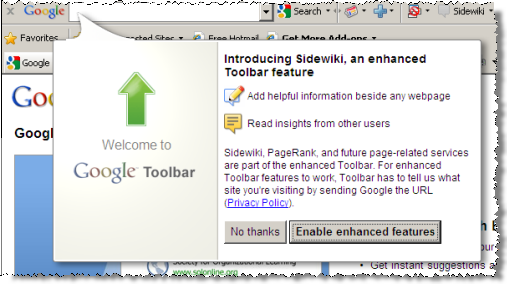

Run the Google Toolbar, and it’s strikingly easy to activate “Enhanced Features”, transmitting to Google the full URL of every page-view, including searches at competing search engines. Some critics find this a significant privacy intrusion (1, 2, 3). But in my testing, even Google’s bundled toolbar installations provide some modicum of notice before installing. And users who want to disable such transmissions can always turn them off – or so I thought until I recently retested.

In this article, I provide evidence calling into question the ability of users to disable Google Toolbar transmissions. I begin by reviewing the contents of Google’s "Enhanced Features" transmissions. I then offer screenshot and video proof showing that even when users specifically instruct that the Google Toolbar be “disable[d]”, and even when the Google Toolbar seems to be disabled (e.g., because it disappears from view), Google Toolbar continues tracking users’ browsing. I then revisit how Google Toolbar’s Enhanced Features get turned on in the first place – noting the striking ease of activating Enhanced Features, and the remarkable absence of a button or option to disable Enhanced Features once they are turned on. I criticize the fact that Google’s disclosures have worsened over time, and I conclude by identifying changes necessary to fulfill users’ expectations and protect users’ privacy.

"Enhanced Features" Transmissions Track Page-Views and Search Terms

Certain Google Toolbar features require transmitting to Google servers the full URLs users browse. For example, to tell users the PageRank of the pages they browse, the Google Toolbar must send Google servers the URL of each such page. Google Toolbar’s “Related Sites” and “Sidewiki” (user comments) features also require similar transmissions.

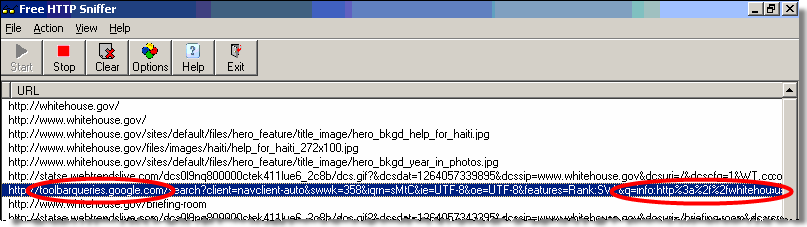

With a network monitor, I confirmed that these transmissions include the full URLs users visit – including domain names, directories, filenames, URL parameters, and search terms. For example, I observed the transmission below when I searched Yahoo (green highlighting) for "laptops" (yellow).

GET /search?client=navclient-auto&swwk=358&iqrn=zuk&orig=0gs08&ie=UTF-8&oe=UTF-8&querytime=kV&querytimec=kV &features=Rank:SW:&q=info:http%3a%2f%2frds.yahoo.com%2f_ylt%3dA0oGkl32p1tLT2EB8ohXNyoA%2fSIG%3d18045klhr%2f

EXP%3d1264384374%2f**http%253a%2f%2fsearch.yahoo.com%2fsearch%253fp%3dlaptops%2526fr%3dsfp%2526xargs%3d12KP

jg1itSroGmmvmnEOOIMLrcmUsOkZ7Fo5h7DOV5CtdY6hNdE%25252DIfXpP0xZg6WO8T7xvSy7HBreVFdJGu277WVk0qfeG%25255FGOW%2

5255F772GnNVme5ujWkF3s%25252DJ%25255F0%25252Dmdn4RvDE8%25252E%2526pstart%3d7%2526b%3d11&googleip=O;72.14.20

4.104;226&ch=711984234986 HTTP/1.1

User-Agent: Mozilla/4.0 (compatible; GoogleToolbar 6.4.1208.1530; Windows XP 5.1; MSIE 8.0.6001.18702)

Accept-Language: en

Host: toolbarqueries.google.com

Connection: Keep-Alive

Cache-Control: no-cache

Cookie: PREF=ID=…

HTTP/1.1 200 OK …
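
The percent-encoding in this request hides the search term only superficially. As a sketch, the snippet below takes one fragment copied from the request above and decodes it with Python's standard `urllib.parse` functions; the helper name `fully_unquote` is mine, and the loop simply repeats decoding because parts of the fragment are encoded more than once.

```python
from urllib.parse import unquote, urlparse, parse_qs

# Fragment copied from the q=info: parameter of the request above.
encoded = "http%253a%2f%2fsearch.yahoo.com%2fsearch%253fp%3dlaptops%2526fr%3dsfp"


def fully_unquote(value: str, max_rounds: int = 5) -> str:
    """Percent-decode repeatedly until the string stops changing."""
    for _ in range(max_rounds):
        decoded = unquote(value)
        if decoded == value:
            break
        value = decoded
    return value


visited = fully_unquote(encoded)
print(visited)
# → http://search.yahoo.com/search?p=laptops&fr=sfp
print(parse_qs(urlparse(visited).query)["p"])
# → ['laptops']
```

Anyone holding the request, including the server that receives it, can recover both the competing search engine and the exact query in this way.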

Screenshots – Google Toolbar Continues Tracking Browsing Even When Users "Disable" the Toolbar via Its "X" Button

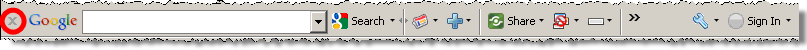

Consistent with modern browser plug-in standards, the current Google Toolbar features an “X” button to disable the toolbar:

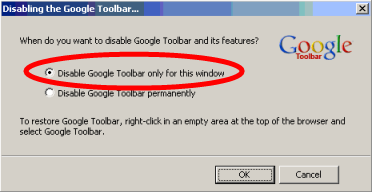

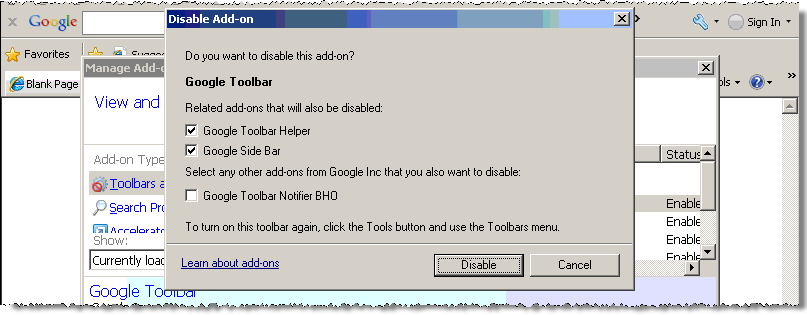

I clicked the “X” and received a confirmation window:

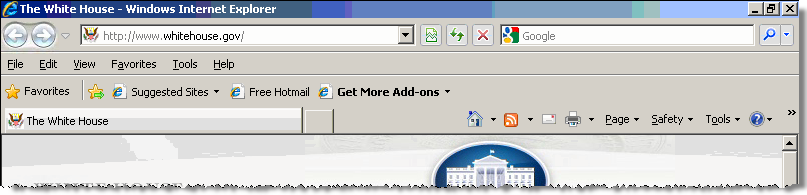

I chose the top option and pressed OK. The Google Toolbar disappeared from view, indicating that it was disabled for this window, just as I had requested. Within the same window, I requested the Whitehouse.gov site:

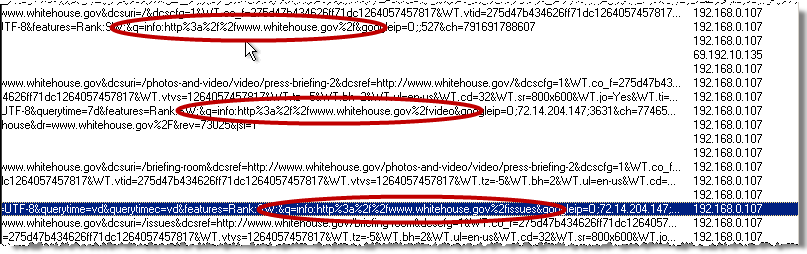

Although I had asked that the Google Toolbar be "disable[d] … for this window" and although the Google Toolbar disappeared from view, my network monitor revealed that Google Toolbar continued to transmit my browsing to its toolbarqueries.google.com server.

See also a screen-capture video memorializing these transmissions.

These Nonconsensual Transmissions Affect Important, Routine Scenarios (added 1/26/10, 12:15pm)

In a statement to Search Engine Land, Google argued that the problems I reported are "only an issue until a user restarts the browser." I emphatically disagree.

Consider the nonconsensual transmission detailed in the preceding section: A user presses "x", is asked "When do you want to disable Google Toolbar and its features?", and chooses the default option, to "Disable Google Toolbar only for this window." The entire purpose of this option is to take effect immediately. Indeed, it would be nonsense for this option to take effect only upon a browser restart: Once the user restarts the browser, the "for this window" disabling is supposed to end, and transmissions are supposed to resume. So Google Toolbar transmits web browsing before the restart, and after the restart too. I stand by my conclusion: The "Disable Google Toolbar only for this window" option doesn’t work at all: It does not actually disable Google Toolbar for the specified window, not immediately and not upon a restart.

Crucially, these nonconsensual transmissions target users who are specifically concerned about privacy. A user who requests that Google Toolbar be disabled for the current window is exactly seeking to do something sensitive, confidential, or embarrassing, or in any event something he does not wish to record in Google’s logs. This privacy-conscious user deserves extra privacy protection. Yet Google nonetheless records his browsing. Google fails this user — specifically and unambiguously promising to immediately stop tracking when the user so instructs, but in fact continuing tracking unabated.

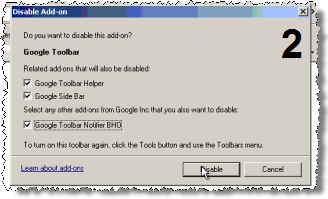

Google Toolbar Continues Tracking Browsing Even When Users "Disable" the Toolbar via "Manage Add-Ons"

Internet Explorer 8 includes a Manage Add-Ons screen to disable unwanted add-ons. On a PC with Google Toolbar, I activated Manage Add-Ons, clicked the Google Toolbar entry, and chose Disable. I accepted all defaults and closed the dialog box.

Again I requested the whitehouse.gov site. Again my network monitor revealed that Google Toolbar continued to transmit my browsing to its toolbarqueries.google.com server. Indeed, as I requested various pages on the whitehouse.gov site, Google Toolbar transmitted the full URLs of those pages, as shown in the second and third circles below.

See also a screen-capture video memorializing these transmissions.

In a further test, performed January 23, I reconfirmed the observations detailed here. In that test, I demonstrated that even checking the "Google Toolbar Notifier BHO" box and disabling that component does not impede these Google Toolbar transmissions. I also specifically confirmed that these continuing Google Toolbar transmissions track users’ searches at competing search engines. See screen-capture video.

In my tests, in this Manage Add-Ons scenario, Google Toolbar transmissions cease upon the next browser restart. But no on-screen message alerts the user to the need for a browser-restart for changes to take effect, so the user has no reason to think a restart is required.

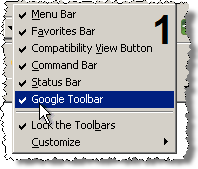

Google Toolbar Continues Tracking Browsing When Users "Disable" the Toolbar via Right Click (added 1/26/10 11:00pm)

Danny Sullivan asked me whether Google Toolbar continues Enhanced Features transmissions if users hide Google Toolbar via IE’s right-click menu. In a further test, I confirmed that Google Toolbar transmissions continue in these circumstances. Below are the four key screenshots: 1) I right-click in empty toolbar space, and I uncheck Google Toolbar. 2) I check the final checkbox and choose Disable. 3) Google Toolbar disappears from view and appears to be disabled. I browse the web. 4) I confirm that Google Toolbar’s transmissions nonetheless continue. See also a screen-capture video.

Users May Easily or Accidentally Activate “Enhanced Features” Transmissions

Google Toolbar invites users to activate Enhanced Features with a single click, the default. Also, notice self-contradictory statements (transmitting ‘site’ names versus full ‘URL’ addresses).

The above-described transmissions occur only if a user runs Google Toolbar in its “Enhanced Features” mode. But it is strikingly easy for a user to stumble into this mode.

For one, the standard Google Toolbar installation encourages users to activate Enhanced Features via a “bubble” message shown at the conclusion of installation. See the screenshot at right. This bubble presents a forceful request for users to activate Sidewiki: The feature is described as “enhanced” and “helpful”, and Google chooses to tout it with a prominence that indicates Google views the feature as important. Moreover, the accept button features bold type plus a jumbo size (more than twice as large as the button to decline). And the accept button has the focus – so merely pressing Space or Enter (easy to do accidentally) serves to activate Enhanced Features without any further confirmation.

I credit that the bubble mentions the important privacy consequence of enabling Enhanced Features: “For enhanced features to work, Toolbar has to tell us what site you’re visiting by sending Google the URL.” But this disclosure falls importantly short. For one, Enhanced Features transmits not just “sites” but specific full URLs, including directories, filenames, URL parameters, and search keywords. Indeed, Google’s bubble statement is internally-inconsistent – indicating transmissions of “sites” and “URLs” as if those are the same thing, when in fact the latter is far more intrusive than the former, and the latter is accurate.
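The gap between a "site" and a "URL" can be shown with a short Python sketch. The example URL is a shortened, decoded form of the Yahoo search address embedded in the transmission shown earlier; the variable names are illustrative only.

```python
from urllib.parse import urlsplit

# Illustrative URL: a shortened form of the Yahoo search from the earlier capture.
url = "http://search.yahoo.com/search?p=laptops&fr=sfp"

parts = urlsplit(url)

site = parts.hostname   # what "site" suggests: the domain name only
path = parts.path       # directories and filename
query = parts.query     # URL parameters, including search keywords

print("site only:          ", site)
print("full URL adds path: ", path)
print("full URL adds query:", query)
```

A disclosure limited to the "site" would cover only the first value. The transmissions documented above include all three.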

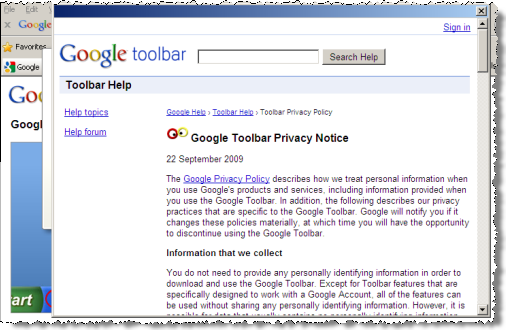

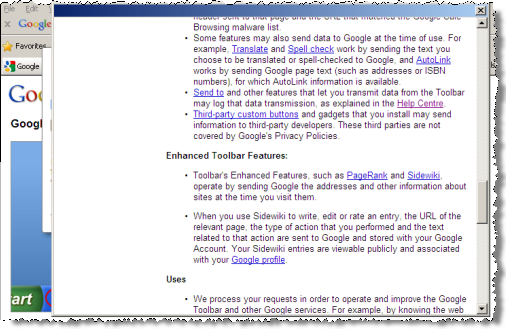

The bubble also falls short in its presentation of Google Toolbar’s Privacy Policy. If a user clicks the Privacy Policy hyperlink, the user sees the window shown in the left image below. Notice that the Privacy Policy loads in an unusual window with no browser chrome – no Edit-Find option to let a user search for words of particular interest, no Edit-Select All and Edit-Copy options to let a user copy text to another program for further review, no Save or Print options to let a user preserve the file. Had Google used a standard browser window, all these features would have been available, but by designing this nonstandard window, Google creates all these limitations.

The substance of the document is also inapt. For one, “Enhanced Toolbar Features” receive no mention whatsoever until the fifth on-screen page (right image below). Even there, the first bullet describes transmission of “the addresses [of] the sites” users visit – again falsely indicating transmission of mere domain names, not full URLs. The second bullet mentions transmission of “URL[s]” but says such transmission occurs “[w]hen you use Sidewiki to write, edit, or rate an entry.” Taken together, these two bullets falsely indicate that URLs are transmitted only when users interact with Sidewiki, and that only sites are transmitted otherwise, when in fact URLs are transmitted whenever Enhanced Features are turned on.

Certain bundled installations make it even easier for users to get Google Toolbar unrequested, and to end up with Enhanced Features too. With dozens of Google Toolbar partners, using varying installation tactics, a full review of their practices is beyond the scope of this article. But they provide significant additional cause for concern.

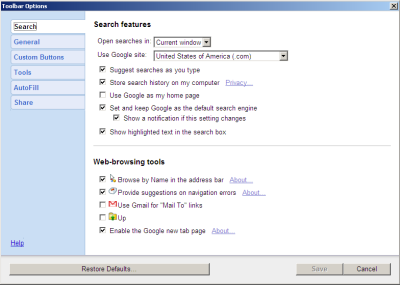

Enhanced Features: Easy to Enable, Hard to Turn Off

The preceding sections show that users can enable Google Toolbar’s Enhanced Features with a single click on a prominent, oversized button. But disabling Enhanced Features is much harder.

Consider a user who changes her mind about Enhanced Features but wishes to keep Google Toolbar in its basic mode. How exactly can she do so? Browsing the Google Toolbar’s entire Options screen, I found no option to disable Enhanced Features. Indeed, Enhanced Features are easily enabled with a single click, during installation (as shown above) or thereafter. But disabling Enhanced Features seems to require uninstalling Google Toolbar altogether, and in any event disabling Enhanced Features certainly lacks any comparably-quick command.

I’m reminded of The Eagles’ Hotel California: "you can check out anytime you like, but you can never leave." And of course, as discussed above, a user who chooses the X button or Manage Add-Ons will naturally believe the Google Toolbar is disabled, when in fact it continues transmissions unabated.

Google Toolbar Disclosures Have Worsened Over Time

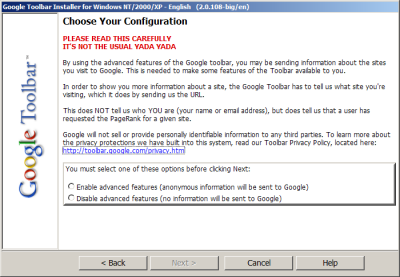

I first wrote about Google Toolbar’s installation and privacy disclosures in my March 2004 FTC comments on spyware and adware. In that document, I praised Google’s then-current toolbar installation sequence, which featured the impressive screen shown at right.

I praised this screen with the following discussion:

I consider this disclosure particularly laudable because it features the following characteristics: It discusses privacy concerns on a screen dedicated to this topic, separate from unrelated information and separate from information that may be of lesser concern to users. It uses color and layout to signal the importance of the information presented. It uses plain language, simple sentences, and brief paragraphs. It offers the user an opportunity to opt out of the transmission of sensitive information, without losing any more functionality than necessary (given design constraints), and without suffering penalties of any kind (e.g. forfeiture of use of some unrelated software). As a result of these characteristics, users viewing this screen have the opportunity to make a meaningful, informed choice as to whether or not to enable the Enhanced Features of the Google Toolbar.

I stand by that praise. But six years later, Google Toolbar’s installation sequence is inferior in every way:

- Now, initial Enhanced Features privacy disclosures appear not in their own screen, but in a bubble pitching another feature (Sidewiki). Previously, format (all-caps, top-of-page), color (red) and language ("… not the usual yada yada") alerted users to the seriousness of the decision at hand.

- Now, Google presents Enhanced Features as a default with an oversized button, bold type, and acceptance via a single keystroke. Previously, neither option was a default, and both options were presented with equal prominence.

- Now, privacy statements are imprecise and internally-inconsistent, muddling the concepts of site and URL. Previous disclosures were clear in explaining that acceptance entails "sending us [Google] the URL" of each page a user visits.

- The current feature name, "Enhanced Features," is less forthright than the prior "Advanced Features" label. The name "Advanced Features" appropriately indicated that the feature is not appropriate for all users (but is intended for, e.g., "advanced" users). In contrast, the current "Enhanced Features" name suggests that the feature is an "enhancement" suitable for everyone.

Google’s Undisclosed Taskbar Button

The Google Toolbar also added a “Google” button to my Taskbar, immediately adjacent to the Start button. The Toolbar installer added this button without any disclosure whatsoever in the installation sequence – not on the toolbar.google.com web page, not in the installer EXE, not anywhere else.

An in-Taskbar button is not consistent with ordinary functions users reasonably expect when they seek and install a “toolbar.” Because this function arrives on a user’s computer without notice and without consent, it is an improper intrusion.

Google’s first step is simple: Fix the Toolbar so that X and Manage Add-Ons in fact do what they promise. When a user disables Google Toolbar, all Enhanced Features transmissions need to stop, immediately and without exception. This change must be deployed to all Google Toolbar users straightaway.

Google also needs to clean up the results of its nonconsensual data collection. In particular, Google has collected browsing data from users who specifically declined to allow such data to be collected. In some instances this data may be private, sensitive, or embarrassing: Savvy users would naturally choose to disable Google Toolbar before their most sensitive searches. Google ordinarily doesn’t let users delete their records as stored on Google servers. But these records never should have been sent to Google in the first place. So Google should find a way to let concerned users request that Google fully and irreversibly delete their entire Toolbar histories.

Even when Google fixes these nonconsensual transmissions, larger problems remain. The current Toolbar installation sequence suffers inconsistent statements of privacy consequences, with poor presentation of the full Toolbar Privacy Statement. Toolbar adds a button to users’ Taskbar unrequested. And as my videos show, once Google puts its code on a user’s computer, there’s nothing to stop Google from tracking users even after users specifically decline. I’ve run Google Toolbar for nearly a decade, but this week I uninstalled Google Toolbar from all my PCs. I encourage others to do the same.

Upromise Savings — At What Cost? updated January 25, 2010

Upromise touts opportunities for college savings. When members shop at participating online merchants, dine at participating restaurants, or purchase selected products at retail stores, Upromise collects commissions which fund college savings accounts.

Unfortunately, the Upromise Toolbar also tracks users’ behavior in excruciating detail. In my testing, when a user checked an innocuously-labeled box promising "Personalized Offers," the Upromise Toolbar tracked and transmitted my every page-view, every search, and every click, along with many entries into web forms. Remarkably, these transmissions included full credit card numbers — grabbed out of merchants’ HTTPS (SSL) secure communications, yet transmitted by Upromise in plain text, readable by anyone using a network monitor or other recording system.

Proof of the Specific Transmissions

I began by running a search at Google. The Upromise toolbar transmissions reported the full URL I requested, including my search provider (yellow) and my full search keywords (green).

POST /fast-cgi/ClickServer HTTP/1.0

User-Agent: upromise/3195/3195/UP23806818/0012

Host: dcs.consumerinput.com

Content-Length: 274

Connection: Keep-Alive

md5=ee593c14f70c1b7f8b3341a91c3e3639&ts=1264045792.140&bua=N%2FA&meth=get&eid=300&fid=NULL&bin=24af1f0

&refererHeader=http%3A%2F%2Fwww%2Egoogle%2Ecom%2F&url=http%3A%2F%2Fwww%2Egoogle%2Ecom%2Fsearch%3Fhl%3D

en%26source%3Dhp%26q%3Di%2Bfeel%2Bsick%26aq%3Df%26aql%26aqi%3Dg10%26oq

HTTP/1.1 200 OK

Date: Thu, 21 Jan 2010 03:49:51 GMT

Server: Apache/2.2.3 (Debian) mod_python/3.3.1 Python/2.5.1 mod_ssl/2.2.3 OpenSSL/0.9.8c

Connection: close

Content-Type: text/html; charset=UTF-8

<capture>

<md5>ee593c14f70c1b7f8b3341a91c3e3639</md5>

<version>1.0</version>

</capture>
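The fields in such a POST body can be separated with Python's standard library, as in the minimal sketch below. The body string is copied from the capture above with the line wraps rejoined and the leading bookkeeping fields (`md5`, `ts`, and so on) omitted for brevity. The variable names are illustrative and imply nothing about how Upromise or Compete process the data.

```python
from urllib.parse import parse_qs, urlsplit

# Two fields copied from the POST body above, with line wraps rejoined.
body = (
    "refererHeader=http%3A%2F%2Fwww%2Egoogle%2Ecom%2F"
    "&url=http%3A%2F%2Fwww%2Egoogle%2Ecom%2Fsearch%3Fhl%3Den%26source%3Dhp"
    "%26q%3Di%2Bfeel%2Bsick%26aq%3Df%26aql%26aqi%3Dg10%26oq"
)

fields = parse_qs(body)              # decode the form-encoded body
visited = fields["url"][0]           # the full URL the user requested

provider = urlsplit(visited).hostname
keywords = parse_qs(urlsplit(visited).query)["q"][0]

print(visited)
print(provider)
print(keywords)
```

One decoding pass over the body recovers the full address; a second pass over its query string recovers the search keywords.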

I clicked a result — a page on Wikipedia. Transmissions included the full URL of my request (blue) as well as the web search provider (yellow) and keywords (green) that had referred me (red) to this site.

POST /fast-cgi/ClickServer HTTP/1.0

User-Agent: upromise/3195/3195/UP23806818/0012

Host: dcs.consumerinput.com

Content-Length: 304

Connection: Keep-Alive

md5=ee7e3174db149d0d97f51b10db9ac58d&ts=1264045931.921&bua=N%2FA&meth=get&eid=300&fid=NULL&bin=24af1f0

&refererHeader=http%3A%2F%2Fwww%2Egoogle%2Ecom%2Fsearch%3Fhl%3Den%26source%3Dhp%26q%3Di%2Bfeel%2Bsick%

26aq%3Df%26aql%3D%26aqi%3Dg10%26oq%3D&url=http%3A%2F%2Fen%2Ewikipedia%2Eorg%2Fwiki%2FI%5FFeel%5FSick

HTTP/1.1 200 OK

Date: Thu, 21 Jan 2010 03:52:11 GMT

Server: Apache/2.2.3 (Debian) mod_python/3.3.1 Python/2.5.1 mod_ssl/2.2.3 OpenSSL/0.9.8c

Connection: close

Content-Type: text/html; charset=UTF-8

<capture>

<md5>ee7e3174db149d0d97f51b10db9ac58d</md5>

<version>1.0</version>

</capture>

I browsed onwards to Buy.com (grey), where I added an item to my shopping cart and proceeded to checkout. When prompted, I entered a (made-up) credit card number. Buy.com appropriately secured the card number with HTTPS encryption. But, remarkably, Upromise extracted and transmitted the full sixteen-digit card number (yellow) — as well as my (also fictitious) CVV code (green), and expiration date (blue).

POST /fast-cgi/ClickServer HTTP/1.0

User-Agent: upromise/3195/3195/UP23806818/0012

Host: dcs.consumerinput.com

Content-Length: 1936

Connection: Keep-Alive

md5=352732ac9ee0d9e970f3c65d62ed03b1&ts=1264046115.702&bua=N%2FA&meth=post&eid=300&fid=NULL&bin=24af1f0

&refererHeader=https%3A%2F%2Fssl%2Ebuy%2Ecom%2FCO%2FCheckout%2FpaymentOptions%2Easpx&url=https%3A%2F%2F

ssl%2Ebuy%2Ecom%2FCO%2FCheckout%2FpaymentOptions%2Easpx%3F%5F%5FEVENTTARGET%26%5F%5FEVENTARGUMENT%26%5F

%5FVIEWSTATE%3D%2FwEPDwUKMTQ1MjY3MzM2Mw9kFgQCAw9kFgQCAQ8PFgIeCEltYWdlVXJsBV1odHRwczovL2EyNDguZS5ha2FtYW

kubmV0L2YvMjQ4Lzg0NS8xMGgvaW1hZ2VzLmJ1eS5jb20vYnV5X2Fzc2V0cy92Ni9oZWFkZXIvMjAwNi9idXlfbG9nby5naWZkZAICD

w8WAh8ABXdodHRwczovL2EyNDguZS5ha2FtYWkubmV0L2YvMjQ4Lzg0NS8xMGgvaW1hZ2VzLmJ1eS5jb20vYnV5X2Fzc2V0cy92Ni9j

b3JwL2NoZWNrb3V0X3Byb2Nlc3MvY2hlY2tvdXRfM19wYXltZW50X2dyZWVuLmdpZmRkAgUPZBYQAgUPZBYCZg9kFgRmDw8WBB8ABVN

odHRwczovL2EyNDguZS5ha2FtYWkubmV0L2YvMjQ4Lzg0NS8xMGgvaW1hZ2VzLmJ1eS5jb20vYnV5X2Fzc2V0cy9jcy9pbWFnZXMvYm

1sLmdpZh4HVmlzaWJsZWdkZAIBDw8WAh8ABWNodHRwczovL2EyNDguZS5ha2FtYWkubmV0L2YvMjQ4Lzg0NS8xMGgvaW1hZ2VzLmJ1e

S5jb20vYnV5X2Fzc2V0cy9idXR0b25zLzIwMDgvYnV0dG9uX2NvbnRpbnVlMi5naWZkZAIHD2QWAmYPZBYKAgUPEA9kFgIeB29uY2xp

Y2sFKFNldFVuaXF1ZVJhZGlvQnV0dG9uKCdyZG9QYXltZW50cycsdGhpcylkZGQCBw8QD2QWAh4Ib25DaGFuZ2UFG2phdmFzY3JpcHQ

6c2hvd0hpZGVDQyh0aGlzKWRkZAIJDw9kFgIfAgUfc3dpdGNoUmFkaW8oJ3Jkb05ld0NyZWRpdENhcmQnKWQCDQ8QZA8WDWYCAQICAg

MCBAIFAgYCBwIIAgkCCgILAgwWDRAFBDIwMTAFBDIwMTBnEAUEMjAxMQUEMjAxMWcQBQQyMDEyBQQyMDEyZxAFBD%2A%2A%2A%2A%7B

1422%7D%26%5F%5FEVENTVALIDATION%3D%2FwEWKQLNvonpCgKYm5r9BALB5eOPBALy2ZqvCQLv2ZqvCQLq2ZqvCQLu2ZqvCQLr2ba

vCQL28Nu2CwLDqZ7xDALDqZrxDALDqabxDALDqaLxDALDqa7xDALDqarxDALDqbbxDALDqfLyDALDqf7yDALcqZLxDALcqZ7xDALcqZ

rxDALrtZj9AgLrtaSgCQLrtbAHAuu13OoIAuu16NEPAuu19LQGAuu1gJgNAuu1rP8FAuu1%2BJcHAuu1hPsPAvai%2BtMMAvaihrcDA

vaikpoKAt7CxckKAsqi76gMAva5sdkEAvLqvYoEAoaI4c0FArr3pYIHApibrtgNijCSRDzGArpVaF4m3bbOLp8yvUM%3D%26rdoPaym

ents%3DrdoNewCreditCard%26lstCCType%3D9%26txtNewCCNumber%3D4412124112341234%26lstNewCCMonth%3D01%26lstN

ewCCYear%3D2010%26txtNewCVV2%3D222%26btnContinue2%2Ex%3D18%26btnContinue2%2Ey%3D19

HTTP/1.1 200 OK

Date: Thu, 21 Jan 2010 03:55:15 GMT

Server: Apache/2.2.3 (Debian) mod_python/3.3.1 Python/2.5.1 mod_ssl/2.2.3 OpenSSL/0.9.8c

Connection: close

Content-Type: text/html; charset=UTF-8

<capture>

<md5>352732ac9ee0d9e970f3c65d62ed03b1</md5>

<version>1.0</version>

</capture>
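To show how little effort it takes to read the payment fields out of this transmission, here is a minimal Python sketch. The string is the tail of the `url` value in the capture above, with line wraps rejoined; the long `__VIEWSTATE` and `__EVENTVALIDATION` fields before it are omitted. The card data is the made-up test data described above, and the variable names are illustrative.

```python
from urllib.parse import unquote, parse_qs

# Tail of the "url" value from the capture above, with line wraps rejoined.
tail = (
    "rdoPayments%3DrdoNewCreditCard%26lstCCType%3D9"
    "%26txtNewCCNumber%3D4412124112341234%26lstNewCCMonth%3D01"
    "%26lstNewCCYear%3D2010%26txtNewCVV2%3D222"
    "%26btnContinue2%2Ex%3D18%26btnContinue2%2Ey%3D19"
)

# One pass of percent-decoding yields ordinary name=value form fields.
form = parse_qs(unquote(tail))

card_number = form["txtNewCCNumber"][0]
expiration = form["lstNewCCMonth"][0] + "/" + form["lstNewCCYear"][0]
cvv = form["txtNewCVV2"][0]

print(card_number, expiration, cvv)
```

The sketch needs no key and no decryption step, only a standard decoding call, because the values were not encrypted by the time they appeared in this transmission.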

Upromise also transmitted my email address. For example, when I logged into Restaurant.com, Upromise’s transmission included my email (yellow):

POST /fast-cgi/ClickServer HTTP/1.0

User-Agent: upromise/3195/3195/UP23806818/0012

Host: dcs.consumerinput.com

Content-Length: 378

Connection: Keep-Alive

md5=26830cfab132bb3122fcf40d8cd0f2f9&ts=1264049936.327&bua=N%2FA&meth=post&eid=303&fid=NULL&bin=24af1f0

&refererHeader=&url=https%3A%2F%2Fwww%2Erestaurant%2Ecom%2Fregister%2Dlogin%2Easp%3Fpurchasestatus%3DRE

GISTER%2DLOGIN%26hdnButton%3DSignin%26txtMemberSignin%3Dedelman%40pobox%2Ecom%26radio%5Fcust%3Dcustomer

%5Fexisting%26txtMemberPassword…

HTTP/1.1 200 OK

Date: Thu, 21 Jan 2010 04:58:56 GMT

Server: Apache/2.2.3 (Debian) mod_python/3.3.1 Python/2.5.1 mod_ssl/2.2.3 OpenSSL/0.9.8c

Connection: close

Content-Type: text/html; charset=UTF-8

<capture>

<md5>26830cfab132bb3122fcf40d8cd0f2f9</md5>

<version>1.0</version>

</capture>

All the preceding transmissions were made over my ordinary Internet connection just as shown above. In particular, these transmissions were sent in plain text, without encryption or encoding of any kind. Any computer with a network monitor (including, for users connected by Wi-Fi, any nearby wireless user) could easily read these communications. With no additional hardware or software, a nearby listener could thereby obtain, e.g., users’ full credit card numbers, even though merchants used HTTPS security to attempt to keep those numbers confidential.

The Destination of Upromise’s Transmissions: Compete, Inc.

As shown in the "host:" header of each of the preceding communications, transmissions flow to the consumerinput.com domain. Whois reports that this domain is registered to Boston, MA traffic-monitoring service Compete, Inc. Compete’s site promises clients access to "detailed behavioral data," and Compete says more than 2 million U.S. Internet users "have given [Compete] permission to analyze the web pages they visit."

Upromise’s Disclosures Misrepresent Data Collection and Fail to Obtain Consumer Consent

Upromise’s installation sequence does not obtain users’ permission for this detailed and intrusive tracking. Quite the contrary: Numerous Upromise screens discuss privacy, and they all fail to mention the detailed information Upromise actually transmits.

The Upromise toolbar installation page touts the toolbar’s purported benefits at length, but mentions no privacy implications whatsoever.

If a user clicks the prominent button to begin the toolbar installation, the next screen presents a 1,354-word license agreement that fills 22 on-screen pages and offers no mechanism to enlarge, maximize, print, save, or search the lengthy text. But even if a user did read the license, the user would receive no notice of detailed tracking. Meanwhile, the lower on-screen box describes a "Personalized Offers" feature, which is labeled as causing "information about [a user’s] online activity [to be] collected and used to provide college savings opportunities." But that screen nowhere admits collecting users’ email addresses or credit card numbers. Nor would a user rightly expect that "information about … online activity" means a full log of every search and every page-view across the entire web.

The install sequence does link to Upromise’s privacy policy. But this page also fails to admit the detailed tracking Upromise performs. Indeed, the privacy policy promises that Personalized Offers data collection will be "anonymous" — when in fact the transmissions include email addresses and credit card numbers. The privacy policy then claims that any collection of personal information is "inadvertent" and that such information is collected only "potentially." But I found that the information transmissions described above were standard and ongoing.

The privacy policy also limits Upromise’s sharing of information with third parties, claiming that such sharing will include only "non-personally identifiable data." But I found that Upromise was sharing highly sensitive personal information, including email addresses and credit card numbers.

In addition, Upromise’s data transmissions contradict representations in Upromise’s FAQ. The top entry in the FAQ promises that Upromise "has implemented security systems designed to keep [users’] information safe." But transmitting credit card numbers in cleartext is reckless and ill-advised: it is specifically contrary to standard Payment Card Industry (PCI) rules, and the opposite of "safe." Indeed, in an ironic twist, Upromise’s FAQ goes on to discuss at great length the benefits of SSL encryption, benefits of course lacking when Upromise transmits users’ credit card numbers without such encryption.

The Upromise toolbar offers an Options screen which again makes no mention of key data transmissions. The screen admits collecting "information about the web sites you visit." But it says nothing of collecting email addresses, credit card numbers, and more.

The transmissions at issue affect only those users who agree to run Upromise’s Personalized Offers system. But until last week, that option was set as the default upon new Upromise toolbar installations — inviting users to initiate these transmissions without a second look.

Even if Upromise ceased the most outrageous transmissions detailed above, Upromise’s installation disclosures would still give ample basis for concern. To describe tracking, transmitting, and analyzing a user’s every page-view and every search, Upromise’s install screen euphemistically mentions that its "service provider may use non-personally identifiable information about your online activity." This admission appears below a lengthy EULA, under a heading irrelevantly labeled "Personalized Offers," doing little to draw users’ attention to the serious implications of Personalized Offers. I don’t see why Upromise users would ever want to accept this detailed tracking: It’s entirely unrelated to the college savings that draw users to the Upromise site. But if Upromise is to keep this function, users deserve a clear, precise, and well-labeled statement of exactly what they’re getting into.

Upromise’s multi-faceted business raises other concerns that also deserve a critical look. The implications for affiliate merchants are particularly serious — a risk of paying affiliate commission for users already at a merchant’s site. But I’ll save this subject for another day.

Upromise’s Response (posted: January 23, 2010)

Upromise told PC Magazine’s Larry Seltzer that less than 2% of their 12 million members were affected. Though 2% of 12 million is 240,000 — a lot!

Upromise staff also wrote to me. I replied with a few questions:

1) How long was the problem in effect?

2) How many users were affected?

3) What caused the problem?

4) I notice that the Upromise toolbar EULA has numerous firmly-worded defensive provisions. (See the entire sections captioned “Disclaimer of Warranty” and “Limitation of Liability” – purporting to disclaim responsibility for a wide variety of problems. And then see Governing Law and Arbitration for a purported limitation on what law governs and how claims may be brought.) For consumers who believe they suffered losses or harms as a result of this problem, will Upromise invoke the defensive provisions in its EULA to attempt to avoid or limit liability?

I’m particularly interested in the answer to the final question. The EULA is awfully one-sided. I appreciate Upromise confirming the serious failure I uncovered. Upromise should also accept whatever legal liability goes with this breach.

Upromise’s Response to My Questions (posted: January 25, 2010)

Upromise replied to my four questions to indicate that the scope of the problem was "approximately 1% of our 12 million members." Upromise did not answer my questions about the duration of the problem, the cause of the problem, or the effect of Upromise’s EULA defenses.

Sears Exposes Customer Purchase History in Violation of Its Privacy Policy

Want to know what a given customer has purchased from Sears? It’s surprisingly easy to find out. Here’s the procedure:

1) Go to the Sears “Manage My Home” site, www.managemyhome.com. Create an account and sign in. Screenshot.

2) On the Home menu, choose Home Profile. In the Search Purchase History section, choose Find Your Products. Screenshot.

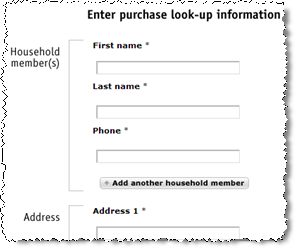

3) Enter the name, phone number, and street address of the customer whose purchases you wish to view. Press Find Products. Screenshot.

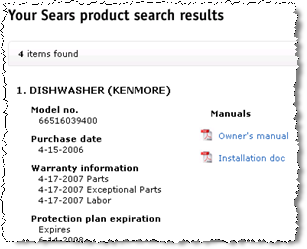

Sears then displays all purchases its database associates with the specific customer — typically major appliances and other large purchases. See examples from Washington, DC, Brookline, Massachusetts, and Lincoln, Massachusetts.

Screenshots: the information required to retrieve a customer’s purchase history, and a customer’s purchase history showing specific items and purchase dates.

Sears Fails to Protect Customer Information

Sears offers no security whatsoever to prevent a ManageMyHome user from retrieving another person’s purchase history by entering that person’s name, phone number, and address.

To verify a user’s identity, Sears could require information known only to the customer who actually made the prior purchase. For example, Sears could require a code printed on the customer’s receipt, a loyalty card number, the date of purchase, or a portion of the user’s credit card number. But Sears does nothing of the kind. Instead, Sears only requests name, phone number, and address — all information available in any White Pages phone book.
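One way to picture the missing check is the minimal Python sketch below. It assumes a hypothetical in-memory record store and a hypothetical receipt code as the private factor. None of the names, fields, or values come from Sears; the sketch only illustrates requiring something a phone book cannot supply.

```python
import hmac

# Hypothetical records; field names and values are illustrative only.
PURCHASES = {
    ("Pat Example", "555-0100", "1 Main St"): {
        "receipt_code": "R-48213",
        "items": ["washing machine (2007-03-14)"],
    },
}

def lookup_purchases(name, phone, address, receipt_code):
    """Return purchases only if the caller also supplies the receipt code."""
    record = PURCHASES.get((name, phone, address))
    if record is None:
        return None
    # Public directory data alone is not enough; require the private code too.
    # compare_digest avoids leaking information through comparison timing.
    expected = record["receipt_code"].encode()
    if not hmac.compare_digest(expected, receipt_code.encode()):
        return None
    return record["items"]

# Name, phone, and address alone (with a guessed code) reveal nothing.
print(lookup_purchases("Pat Example", "555-0100", "1 Main St", "wrong"))
# The customer who holds the receipt can retrieve the history.
print(lookup_purchases("Pat Example", "555-0100", "1 Main St", "R-48213"))
```

A real deployment would also need rate limiting and a way to recover lost receipt codes. The point of the sketch is only that the lookup key and the proof of identity should not be the same public data.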

Neither does Sears even include any special instructions or obligations in its signup agreement with users: The ManageMyHome Terms of Use say nothing about what information users may access. Indeed, while Sears includes a small-type link to its Terms of Use, Sears never asks users to affirmatively accept the Terms.

These Disclosures Are Contrary to Sears’s Explicit Promises

Sears violates its privacy policy when it discloses users’ purchases to the general public. The Sears Customer Information Privacy Policy lists specific circumstances in which Sears may share customer information. These circumstances are relatively broad — allowing Sears to share customer data “with members of the Sears family of businesses … to provide … promotional offers that we believe will be of interest.” Disclosures are also permitted “to provide [users] with products or services that [they] have requested,” to “trusted service providers that need access to your information to provide operational or other support services,” to credit bureaus, and to regulatory authorities and law enforcement. But none of these provisions grants Sears the right to share users’ purchases with the general public.

Sears may argue that its web site privacy policy only applies to users’ online purchases, and does not govern purchases made in retail stores. Perhaps. But I doubt in-store customers expect their friends, neighbors, and the general public to be able to find out what they bought. I’m still trying to determine what privacy (if any) Sears promises its in-store customers.

Sears’s Privacy Breach in Context

Sears’s exposure of customer purchase history fits within a long history of unintended web site disclosures. For example, in October 2000 I showed that Buy.com’s return system was revealing customer names, addresses, and phone numbers at publicly-available URLs. But Sears’s disclosure is more troubling: Sears discloses the specific products users purchased. Sears’s disclosures apply to all users, not just those who return products. And Sears’s disclosures come some 7+ years after Buy.com’s breach — a period of great advance in online security.

The combination of data Sears provides could open the door to serious harms to Sears customers. ManageMyHome reports the specific products customers purchased, as well as the dates of each such purchase. With this information, a miscreant could approach a customer and pretend to be a Sears representative. Consider: “Your washing machine was recalled, and I need to install a new motor.” Or, “I’m here to provide the free one-year check-up on your dishwasher.”

The ManageMyHome site offers some useful services: Consolidated information about dates of purchase, clear listing of warranty status, and easy links to product manuals. Sears touted these benefits in its recent coverage of ManageMyHome.

But as soon as Sears resolved to provide online access to customers’ purchase histories, Sears staff should have recognized the need to determine which users are truly authorized to see this information. Sears’s failure to effectively authenticate users is therefore puzzling. Did Sears staff fail to notice the problem? Decide to ignore it when they couldn’t devise an easy solution to protect users’ purchase histories? Resolve to argue that purchase history merits no better protection than the current system provides?
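For readers who want the authorization question made concrete, here is a minimal Python sketch of the kind of check at issue. It is an illustration only: the `Account` type, the `PURCHASES` table, and the `purchase_history` function are hypothetical names invented for this example, and nothing here reflects how Sears's systems are actually built. The idea is simply that a purchase history is released only to a requester who has already been verified at the address in question.

```python
from dataclasses import dataclass, field


@dataclass
class Account:
    """A logged-in user and the postal addresses verified for that user (hypothetical)."""
    username: str
    verified_addresses: set = field(default_factory=set)


# Hypothetical purchase records, keyed by a normalized address string.
PURCHASES = {
    "1 main st, springfield": ["washing machine (2006-03-14)"],
}


def normalize(address: str) -> str:
    """Lowercase the address and collapse runs of whitespace."""
    return " ".join(address.lower().split())


def purchase_history(requester: Account, address: str) -> list:
    """Return purchases only if the requester has been verified at that address."""
    key = normalize(address)
    allowed = {normalize(a) for a in requester.verified_addresses}
    if key not in allowed:
        # Refuse rather than reveal another household's purchases.
        raise PermissionError("requester is not verified for this address")
    return PURCHASES.get(key, [])
```

How an address gets verified in the first place (a mailed code, a receipt number, a payment card check) is the hard part, and this sketch deliberately leaves it open.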

Combining this privacy breach with Sears’s poorly-disclosed installation of ComScore tracking software, it appears that Sears is not effectively protecting its users’ and customers’ privacy. Perhaps that’s no surprise in light of Sears’s recent financial distress — a 99% drop in profits in third quarter 2007, compared with the third quarter of 2006. But users need not accept excuses for Sears’s lackadaisical treatment of their private information. No matter the company’s financial standing, Sears ought to comply with its stated privacy policy and treat user information with the care users rightly expect.

I wrote to Sears ManageMyHome via the addresses on their Contact Us page. To their credit, they responded quickly (less than ninety minutes). However, their reply does not address the seriousness of this situation. Their reply follows:

“We appreciate that you have a security concern. Thank you for taking the time to share your comments with us. We appreciate hearing feedback from our customers, and will pass this information to the appropriate area to research.”

Update (January 4, 5pm): Sears has disabled the search feature described above. Attempts to retrieve a purchase history now yield the message “We’re sorry, this feature is currently disabled.”

Thanks to an anonymous contributor, using pseudonym Heather H, for the tip that led to this article.

The Sears "Community" Installation of ComScore

Late last month, Benjamin Googins (a senior researcher in the Anti-Spyware unit at Computer Associates) critiqued a ComScore installation performed by Sears’ “Sears Holdings Community” (“My SHC Community” or “SHC”). After reviewing the installation sequence, Ben concluded that the installation offered “very little mention of software or tracking” and otherwise fell short of CA and industry standards. I agree.

I write today to add my own critique. I begin by presenting the entire installation sequence in screenshots and video. I then explain why the limited notice provided falls far short of the standards the FTC has established. Finally, I show that Sears’ claims of adequate notice are demonstrably false.

The SHC installation proceeds in four steps:

1) An email from Sears after a user provides an address at Sears.com. In seven paragraphs plus a set of bullet points, 582 words in total, the email describes the SHC service in general terms. But the paragraphs’ topic sentences make no mention of any downloadable software, nor do the bullet points offer even a general description of what the software does. The only disclosure of the software’s effects comes midway through the fourth paragraph, where the program is described as “research software [that] will confidentially track your online browsing.” Sophisticated users who notice this text will probably abandon installation and proceed no further. But novices may mistakenly think the tracking is specific to Sears sites: SHC is a research program offered by Sears, so it is difficult to understand why tracking would occur elsewhere. Furthermore, the quoted text appears midway through a paragraph — in no way brought to users’ attention via topic sentences, headings, section formatting, or other labels. So it’s strikingly easy to miss.

2) If a user presses the “Join” button in the email, the user is taken to an SHC web-based installation sequence that further details SHC’s offerings. The first page describes some aspects of SHC in reasonable detail — with six prominent and clear bullet points. Yet nowhere does this text make any mention whatsoever of downloadable software, market research, or other tracking.

3) Pressing “Join” in the SHC screen takes a user to a “Welcome to My SHC Community” page which requests the user’s name, address, and household size. The page then presents a document labeled “Privacy Statement and User License Agreement” — 2,971 words of text, shown in a small scroll box with just ten lines visible, requiring fully 54 on-screen pages to view in full. The initial screen of text is consistent with the “privacy statement” heading: The visible text indicates that the document describes “what information [SHC] gather[s and] how [SHC] use[s] it” — typical subjects for a privacy policy. But despite the title and the first screen of text, the document actually proceeds to an entirely different subject, namely downloadable software and its far-reaching effects: The tenth page admits that the application “monitors all of the Internet behavior that occurs on the computer on which you install the application, including … filling a shopping basket, completing an application form, or checking your … personal financial or health information.” That’s remarkably comprehensive tracking — but mentioned in a disclosure few users are likely to find, since few users will read through to page 10 of the license.

Within the Privacy Statement section, a link labeled “Printable version” offers users a full-screen version of the document, requiring “only” ten on-screen pages on my test PC. But nothing in the Privacy Statement caption or visible text suggests that the document merits such thorough review. Due to the labeling and the first screen of text, few users will see any need to click through to the full-screen version.

4) A user next arrives at a screen labeled “You’re almost finished!” Clicking “Next” triggers an ActiveX screen offering an unnamed program, signed by a company called TMRG, Inc. (nowhere previously mentioned in the installation sequence), authenticated by Thawte (part of VeriSign). Pressing Yes in the ActiveX yields an installation program with no further opportunity to cancel installation. Packet sniffer analysis confirms that ComScore software is installed.

See also a video of the installation sequence.

The FTC’s recent settlements with Direct Revenue and Zango explain the disclosure and consent required before installing tracking software on users’ computers. To install such software on users’ PCs, vendors must obtain “express consent” — defined to require “clear[] and prominent[] disclos[ure of] the material terms of such software … including the nature and purpose of the program and the effects it will have … prior to the display of, and separate from, any final End User License Agreement.” “Clear[] and prominent[]” disclosures are defined to be those that are “unavoidable”, among other requirements.

The Sears SHC installation of ComScore falls far short of these rules. The limited SHC disclosure provided by email lacks the required specificity as to the nature, purpose, and effects of the ComScore software. Nor is that disclosure “unavoidable,” in that the key text appears midway through a paragraph, without a heading or even a topic sentence to alert users to the important (albeit vague) information that follows.

The disclosure provided within the Privacy Statement and User License Agreement also cannot satisfy the FTC’s requirements. The FTC demands a disclosure “prior to … and separate from” any license agreement, whereas the only disclosure on this page occurs within the license agreement — exactly contrary to FTC instructions. Furthermore, users can easily overlook text on page ten of a lengthy license agreement. Such text is the opposite of “unavoidable.”

The SHC/ComScore violation could hardly be simpler. The FTC requires that software makers and distributors provide clear, prominent, unavoidable notice of the key terms. SHC’s installation of ComScore did nothing of the kind.

Other Installation Deficiencies

Beyond the problems set out above, the SHC installation also falls short in other important respects.

Failure to provide the promised additional information. Sears’ initial email promises that “during the registration process, you’ll learn more about this application software.” In fact, no such information is provided in the visible, on-screen installation sequence. Based on this false promise and users’ general experience, users may reasonably expect that the download link in step 4 will offer additional information about the software at issue, along with an opportunity to cancel installation if desired. In fact no such information is ever provided, nor do users have any such opportunity to cancel.

Choosing little-known product names that prevent users from learning more. The initial SHC email refers to the ComScore software as “VoiceFive.” The license agreement refers to the ComScore software as “our application” and “this application” without ever providing the application’s name. The ActiveX prompt gives no product name, and it reports company name “TMRG, Inc.” These conflicting names prevent users from figuring out what software they are asked to accept. Furthermore, none of these names gives users any easy way to determine what the software is or what it does. In contrast, if SHC used the company name “ComScore” or the product name “RelevantKnowledge,” users could run a search at any search engine. These confusing name-changes fit the trend among spyware vendors: Consider Direct Revenue’s dozens of names (AmazingMerchants, BestDeals, Coolshopping, IPInsight, Blackone Data, Tps108, VX2, etc.).

Critiquing Sears SHC’s Response

To my surprise, Sears defends the practices described above. In a reply to CA’s Ben Googins, Sears SHC VP Rob Harles claims that SHC “goes to great lengths to describe the tracking aspect.” In particular, Harles says “[c]lear notice appears in the invitation”, “on the first signup page”, and “in the privacy policy and user licensing agreement.”

I emphatically disagree. The email invitation provides vague notice midway through a lengthy paragraph that, according to its topic sentence, is otherwise about another topic. The first signup page makes no mention at all of any downloadable software. The privacy policy and license agreement describe the software only on the tenth page of text (where few users are likely to find the disclosures), and even then they fail to reference the program by name.

Harles further claims that the installer provides “a progress bar that they [users] can abort.” Again, I disagree. The video and screenshots are unambiguous: The SHC installer shows no progress bar and offers no abort button.

In June 2007, I showed other examples of ComScore software installing without consent — including multiple installations through security exploits. TRUSTe responded by removing ComScore’s RelevantKnowledge from TRUSTe’s Trusted Download Program for three months. Now that more than five months have elapsed, I expect that ComScore is seeking readmission. But the installation shown above stands in stark contrast to TRUSTe Trusted Download rules. See especially the requirement that primary notice be “clear, prominent and unavoidable” (Schedule A, sections 3.(a).(iii) and 1.(hh)).

Why so many problems for ComScore? The basic challenge is that users don’t want ComScore software. ComScore offers users nothing sufficiently valuable to compensate them for the serious privacy invasion ComScore’s software entails. There’s no good reason why users should share such detailed information about their browsing, purchasing, and other online activities. So time and time again, ComScore and its partners resort to trickery (or worse) to get their software onto users’ PCs.

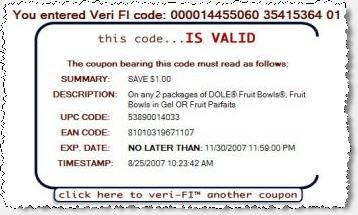

A Closer Look at Coupons.com (updated September 24, 2007)

I recently examined software from Coupons.com. At first glance their approach seems quite handy. Who could oppose free coupons? But a deeper look reveals troubling behaviors I can’t endorse. This piece summarizes my key concerns:

- Installing with deceptive filenames and registry entries that hinder users’ efforts to fully remove Coupons’ software.

- Failing to remove all Coupons.com components upon a user’s specific request.

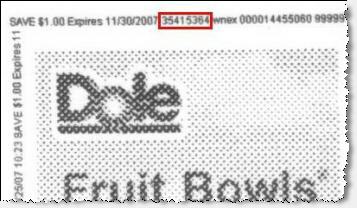

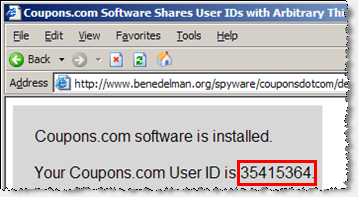

- Assigning each user an ID number, and placing this ID onto each printed coupon, without any meaningful disclosure.

- Allowing third-party web sites to retrieve users’ ID numbers, in violation of Coupons.com’s privacy policy.

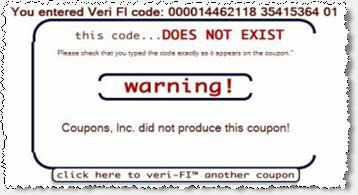

- Allowing any person to check whether a given user has printed a given coupon, in violation of Coupons.com’s privacy policy.

Coupons.com offers users coupons which they can print at home, then redeem at retailers.

Coupons.com specifically promises users that they may "use as many [coupons] as [they] like." But in fact, Coupons.com takes great pains to limit how many coupons users can print. Rather than simply letting users print GIF or JPG coupons from an ordinary web page, Coupons.com requires that users install a coupon-printing ActiveX control. Coupons.com also customizes each coupon with information about who printed it and when. These design decisions increase the complexity of Coupons.com’s business — giving rise to the serious consent and privacy issues set out below.

Installing with deceptive filenames and registry entries

On an ordinary test PC that had never previously run any software from Coupons.com, I installed Coupons.com’s Coupon Bar 5.0 software. I requested a coupon to be printed, then ran an "InCtrl" comparison of changes made to my computer. InCtrl revealed the following new files and registry entries:

c:\windows\uccspecc.sys

c:\windows\WindowsShellOld.Manifest.1

HKEY_LOCAL_MACHINE\SOFTWARE\ClassesManifest.Template.1

HKEY_LOCAL_MACHINE\SOFTWARE\Microsoft\Windows\CurrentVersion\uccspecc

HKEY_LOCAL_MACHINE\SOFTWARE\Microsoft\Windows\CurrentVersion\Controls Folder\Presentation Style
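The before-and-after comparison that produced a listing like this can be approximated in a few lines of Python. This is a rough sketch of the general snapshot-and-diff technique, not a description of how InCtrl itself works; the function names `snapshot` and `diff` are my own for illustration, and the sketch covers files only, not registry entries.

```python
import os


def snapshot(root: str) -> dict:
    """Map each file path under root to its (size, modification time)."""
    state = {}
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            path = os.path.join(dirpath, name)
            try:
                info = os.stat(path)
            except OSError:
                continue  # file vanished or is unreadable; skip it
            state[path] = (info.st_size, info.st_mtime_ns)
    return state


def diff(before: dict, after: dict) -> dict:
    """Report which paths were added, removed, or changed between two snapshots."""
    added = sorted(set(after) - set(before))
    removed = sorted(set(before) - set(after))
    changed = sorted(
        path for path in set(before) & set(after) if before[path] != after[path]
    )
    return {"added": added, "removed": removed, "changed": changed}
```

In use, one would call `snapshot` on a directory of interest before installing the software under test, call it again afterward, and pass both results to `diff`. Files with innocuous-sounding names stand out in the "added" list simply because they were not there before.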